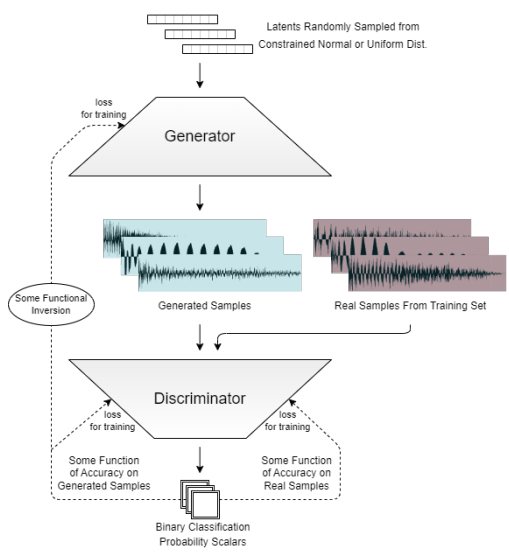

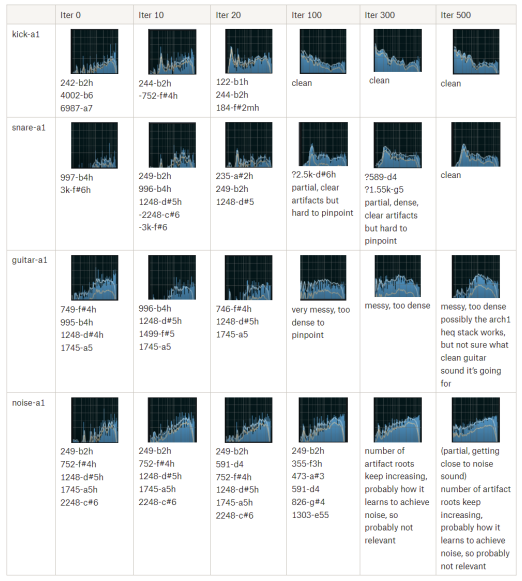

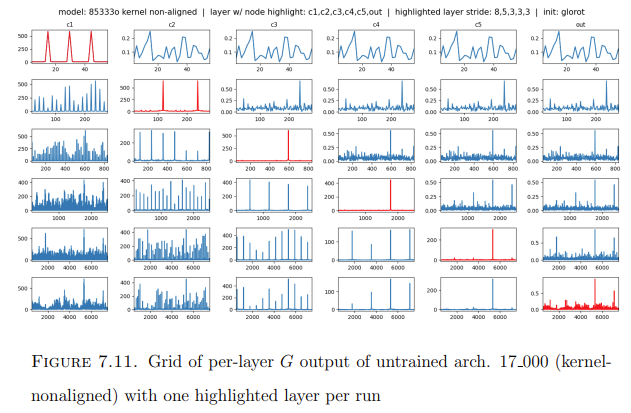

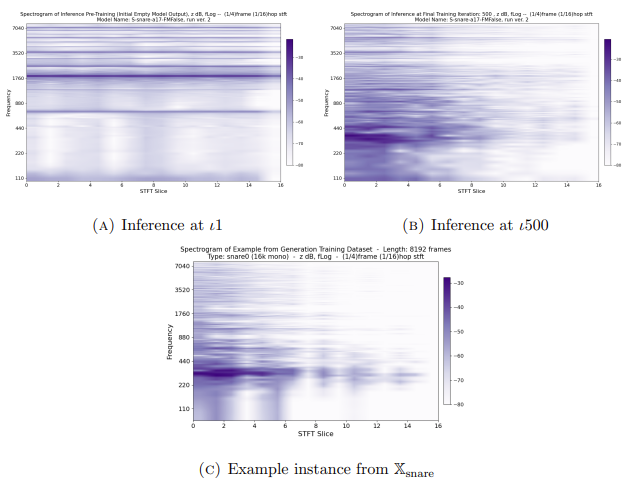

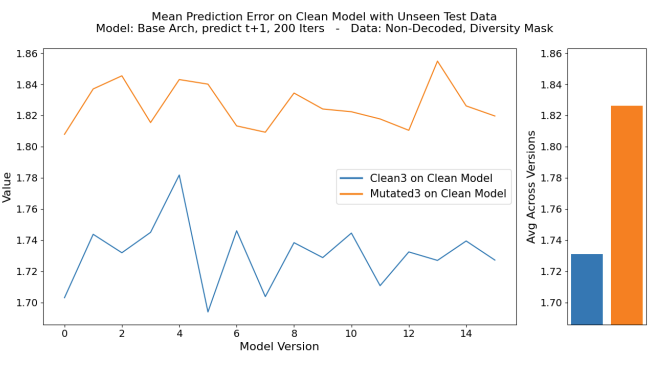

Implemented over 150 GAN architectures for instrument and sound-effect sample generation, enabling an exhaustive, fine-grained, controlled experimental comparison; this represents the largest multivariate analysis of structural model variations in this domain to date. The set includes the structurally novel PrismGAN and SBIGAN models, both of which measurably eliminate spectral artifacts at inference time. Analysis was performed along 79 metrics, including several novel spectral comparison methods for evaluating generated audio.

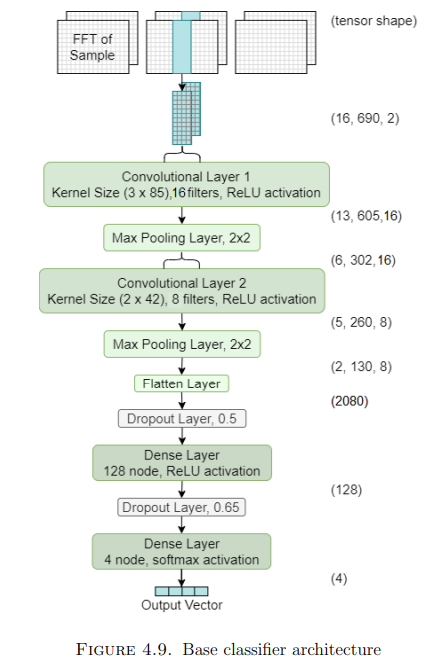

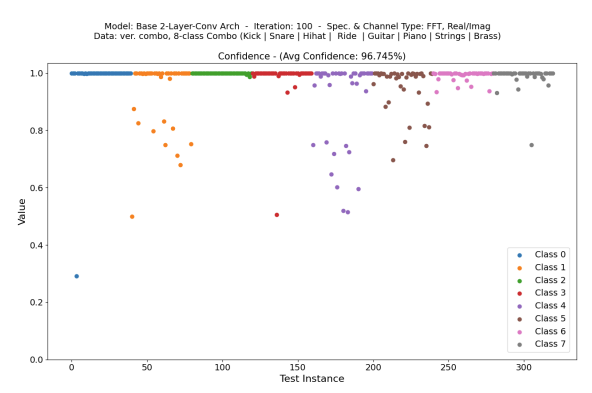

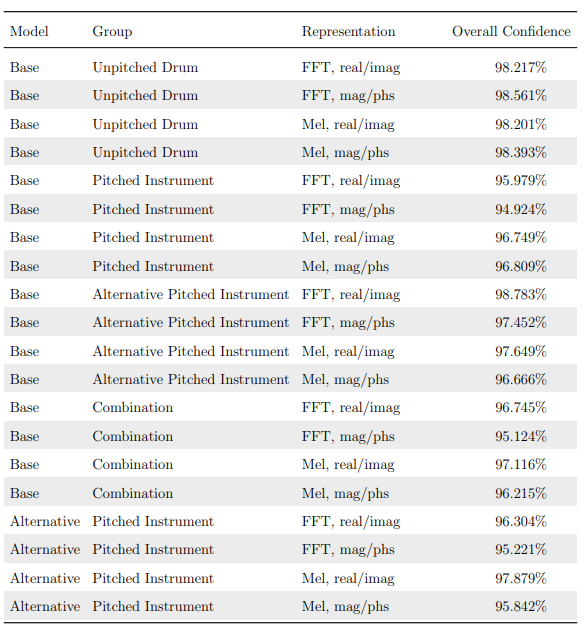

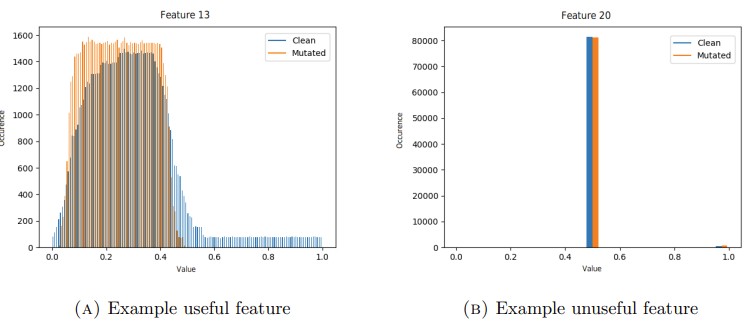

Compact CNN for supervised raw audio sample classification by instrument type. Achieves over 95% accuracy on any class combination.

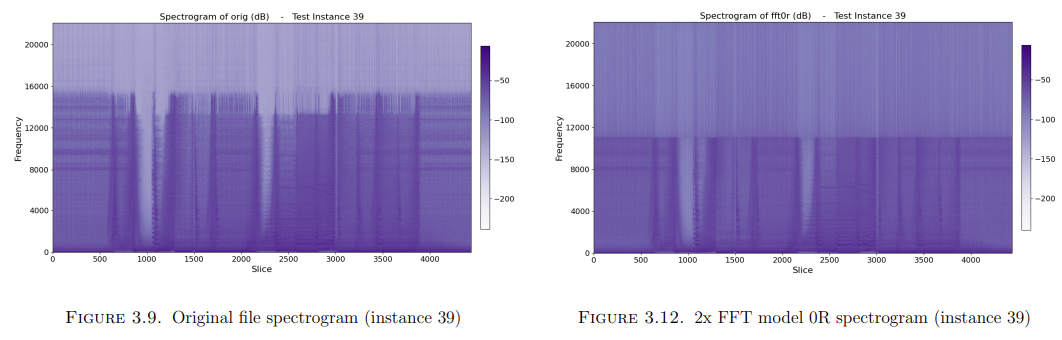

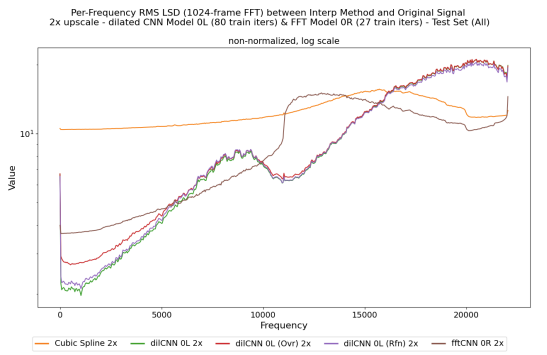

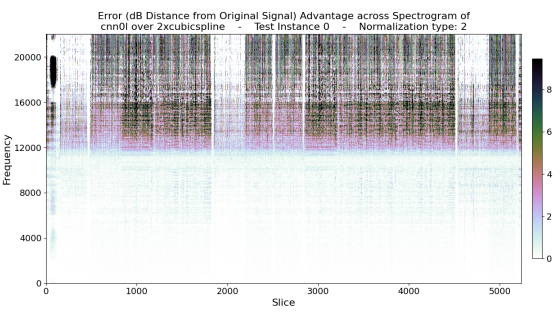

Dilated CNN for raw-audio music super-resolution. In the 2x upscaling case it consistently outperforms the baseline on average, as measured by log-spectral distance, and its advantage grows with repeated upscaling.
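
Log-spectral distance (LSD) compares a reference signal and an estimate frame by frame in the log-power-spectral domain: for each STFT frame it takes the root-mean-square difference of the log10 power spectra, then averages over frames. The sketch below uses that common formulation; the frame length, hop size, Hann window and `eps` floor are assumptions, since the exact settings used in this project aren't stated, and the function names are hypothetical.

```python
import numpy as np

def power_spectrogram(signal, frame_len=2048, hop=512):
    """|STFT|^2 of a 1-D signal using a Hann window; shape (frames, bins)."""
    if len(signal) < frame_len:
        raise ValueError("signal must be at least one frame long")
    window = np.hanning(frame_len)
    n_frames = 1 + (len(signal) - frame_len) // hop
    frames = np.stack([signal[i * hop:i * hop + frame_len] * window
                       for i in range(n_frames)])
    return np.abs(np.fft.rfft(frames, axis=1)) ** 2

def log_spectral_distance(reference, estimate, frame_len=2048, hop=512, eps=1e-10):
    """Mean over frames of the RMS difference between log10 power spectra."""
    if len(reference) != len(estimate):
        raise ValueError("reference and estimate must have the same length")
    ref = np.log10(power_spectrogram(reference, frame_len, hop) + eps)
    est = np.log10(power_spectrogram(estimate, frame_len, hop) + eps)
    per_frame = np.sqrt(np.mean((ref - est) ** 2, axis=1))  # RMS over frequency bins
    return float(np.mean(per_frame))                       # average over frames
```

Lower is better and identical signals score 0. In a super-resolution comparison, `reference` would be the original high-rate audio and `estimate` the upscaled output of either the model or the baseline.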

Dilated CNN for detecting sparse hidden messages injected into online game network traffic by an embedding system designed to resist statistical analysis methods. Requires no prior knowledge of the network protocol and needs only part of a play session (under an hour) to reach reasonable confidence.
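
Dilation is what lets a compact convolutional stack see long stretches of a sequence: with kernel size k and dilations d_i, one output depends on 1 + Σ (k − 1)·d_i inputs, so doubling the dilation per layer grows the receptive field exponentially rather than linearly. The sketch below assumes the traffic is fed to the network as a 1-D sequence; that representation, the kernel size and the dilation schedule are illustrative assumptions, not details given above, and the helper names are hypothetical.

```python
import numpy as np

def dilated_conv1d(x, kernel, dilation):
    """'Valid' (unpadded) 1-D dilated cross-correlation, as computed by a CNN layer."""
    k = len(kernel)
    span = (k - 1) * dilation + 1          # input samples covered by one output
    out_len = len(x) - span + 1
    return np.array([sum(kernel[j] * x[i + j * dilation] for j in range(k))
                     for i in range(out_len)])

def receptive_field(kernel_size, dilations):
    """Number of input positions seen by one output of a stack of dilated layers."""
    return 1 + sum((kernel_size - 1) * d for d in dilations)

# Ten layers with kernel size 3: doubling dilations vs. no dilation.
print(receptive_field(3, [2 ** i for i in range(10)]))  # 2047
print(receptive_field(3, [1] * 10))                     # 21
```

With the same parameter count per layer, the dilated stack covers roughly a hundred times more context, which matters when the signal of interest is sparse and spread over a long session.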

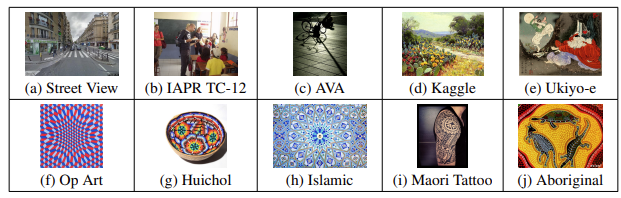

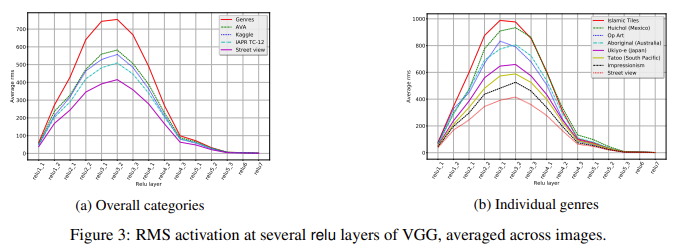

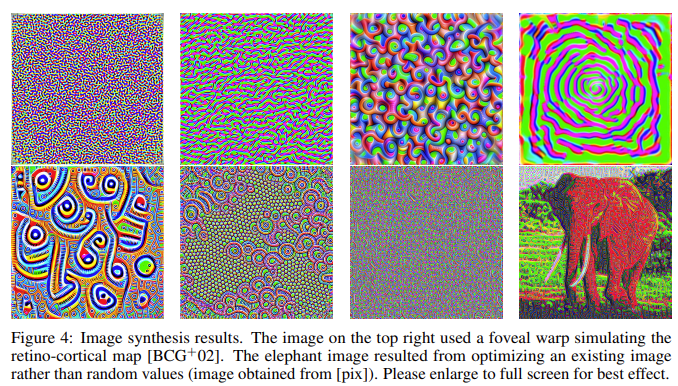

Deep CNN for binary classification of whether an image is human-made art, trained on a large dataset of human-made artworks alongside semi-curated, scraped non-art images. The trained network was then used to iteratively optimize an image to maximize its activations, with the aim of visualizing the network’s concept of what qualifies an image as art. Proposed and implemented intermediate random-transform layers, applied during both training and image synthesis, which vastly broadened the network’s perceptual range (over 50% improvement in classification accuracy on a large test set) without needing any additional data.
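
The idea behind a random-transform layer during synthesis is that each gradient-ascent step sees the image under a fresh random transform, so the optimized image must excite the network robustly rather than through brittle pixel-level patterns. The sketch below is one plausible form under stated assumptions: the transform is a random translation plus optional flip (the actual transforms aren't specified), and a toy linear "neuron" stands in for the real CNN so the gradient has a closed form. All names are hypothetical.

```python
import numpy as np

def random_transform(img, rng, max_shift=4):
    """Random translation (with wrap-around) followed by an optional horizontal flip."""
    dy, dx = (int(v) for v in rng.integers(-max_shift, max_shift + 1, size=2))
    flip = bool(rng.integers(2))
    out = np.roll(img, (dy, dx), axis=(0, 1))
    return (out[:, ::-1] if flip else out), (dy, dx, flip)

def invert_transform(arr, params):
    """Undo random_transform; also maps a gradient w.r.t. its output back to its input."""
    dy, dx, flip = params
    if flip:
        arr = arr[:, ::-1]
    return np.roll(arr, (-dy, -dx), axis=(0, 1))

def maximize_activation(weights, steps=200, lr=0.05, seed=0):
    """Gradient ascent on a toy linear 'neuron' sum(weights * T(x)), T random each step."""
    rng = np.random.default_rng(seed)
    x = rng.normal(scale=0.01, size=weights.shape)
    for _ in range(steps):
        # A real network would receive the transformed image here.
        _, params = random_transform(x, rng)
        # For this linear toy model the gradient w.r.t. x is T^-1(weights);
        # a real network would obtain it by backpropagating through T.
        grad = invert_transform(weights, params)
        x = np.clip(x + lr * grad, -1.0, 1.0)  # keep pixel values bounded
    return x
```

Because the translation and flip are permutations of pixels, their inverse is also the transpose that backpropagation applies, which is why `invert_transform` serves both purposes. During training, the same layer would act as on-the-fly augmentation of the input images.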

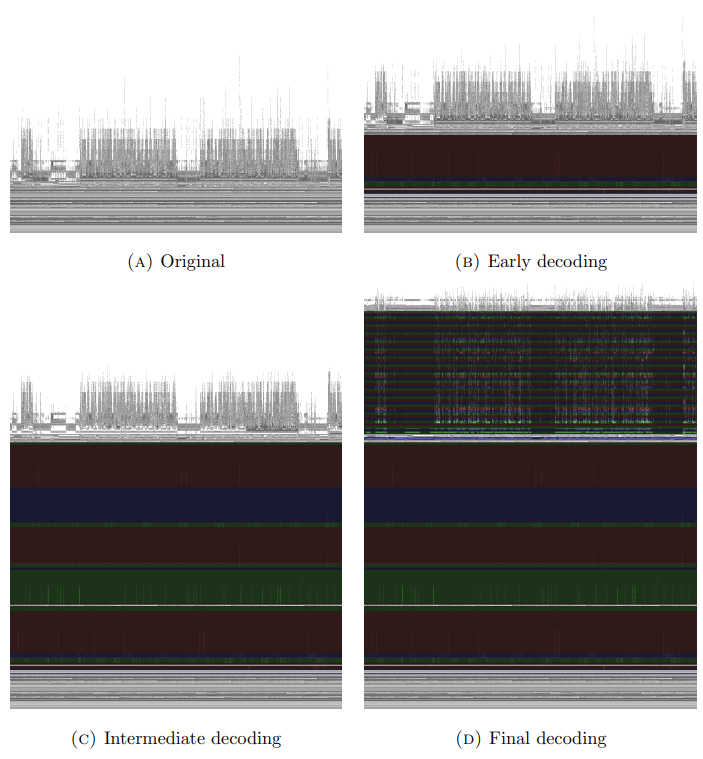

Neural network hallucinations produced by maximizing the activations of a vision model trained on art databases: